Tomorrow's Clinical AI: A Taxonomy of Physician Needs

Abstract

As AI foundation labs shift from general-purpose models toward specialized clinical applications, aligning AI capabilities with real-world physician workflows represents one of the most promising frontiers for improving patient care. While current models perform exceptionally well on exam-style medical questions, physicians consistently highlight untapped opportunities for AI to deliver meaningful value in everyday clinical practice.

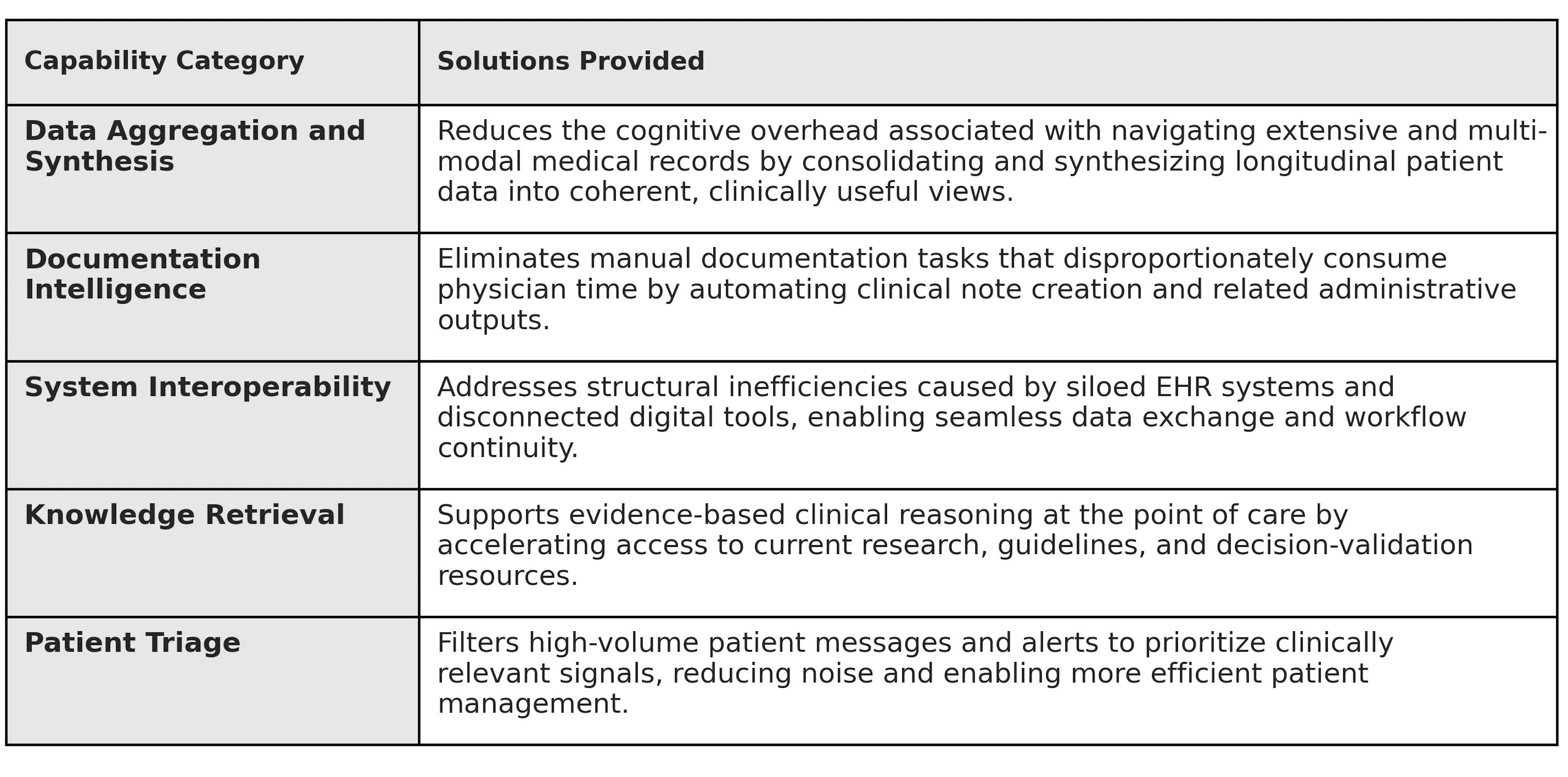

To explore this, we designed a multi-modal survey and gathered in-depth feedback from 50 physicians across the United States to identify the highest-impact opportunities for AI to transform physician workflows and improve patient outcomes. Our analysis synthesizes this feedback into five functional physician support domains: Aggregation, Generation, Interoperability, Knowledge Retrieval, and Triage. By deepening their understanding of clinician needs, desires, and concerns within these categories, foundation labs and AI companies can address the specific friction points preventing AI from becoming a core clinical utility for physicians.

Taken together, these findings highlight not only the limitations of current systems but also the extraordinary opportunity for clinical AI to reshape how physicians interact with medical information, make decisions, and deliver care.

Background and context

The physician respondents (see contributor list at the end of this piece) come primarily from academic medical centers, with a skew towards subspecialized and/or procedural practice settings. Respondents were invited to join this study based on their participation in Office Hours’ expert network. Many but not all have experience evaluating and training artificial intelligence models toward better clinical performance through Office Hours.

Physicians were first asked how much of their daily time they spend across six categories: clinical documentation and charting, diagnostic and clinical decision-making, inbox management and communication, research for patient care, surgery and planning, and prior authorization. The results show that documentation and clinical decision-making dominate physician workloads, making them some of the most promising areas where clinical AI could meaningfully improve efficiency and care delivery. A substantial proportion of respondents report spending more than 30%, and in many cases over 50%, of their day on documentation. Inbox management represents a consistent secondary burden, often consuming up to 30% of the day and contributing to fragmented, interruption-driven work. Research and surgical planning are high-intensity but concentrated among smaller subsets of physicians, reflecting specialty-specific demands. Prior authorization and insurance management, while typically occupying a smaller percentage of daily time per physician, remains a widely distributed burden across the population.

Overall, our data indicate that the greatest structural opportunities for AI to reclaim physician time and improve clinical focus—and therefore the highest-leverage targets for clinical AI—sit in documentation, decision support, and communication workflows.

These workload patterns directly inform where clinical AI must deliver meaningful value. Rather than treating AI as a generic productivity layer, our analysis maps physician time burdens to five functional domains where targeted capabilities can alleviate the most persistent friction.

I. Data Aggregation and Synthesis: The "Chart Distiller"

Modern electronic health records contain extraordinary volumes of patient data, but physicians are often required to manually synthesize this information across fragmented systems. This challenge represents a major opportunity for AI to act as a clinical “chart distiller,” transforming dispersed data into coherent, actionable insights for physicians. Today, physicians often describe a "digital chase," in which they must find and review extensive charts across multiple encounters to extract pertinent history—a time-intensive step when preparing for both outpatient visits and surgical operations. By dramatically reducing this cognitive overhead, clinical AI has the potential to return physicians’ attention to complex medical decision-making and patient connection.

The technical mandate for foundation labs is to solve unstructured data extraction through specific, high-utility use cases. Physicians need tools that can automatically synthesize complex medical histories, such as generating preoperative and pre-visit briefings as well as comprehensive tumor board summaries. Currently, these tasks require physicians not only to parse years of unstructured EHR notes but also, in many cases, to manually collate scanned, non-searchable PDFs, pathology reports, and fragmented imaging results. As one surgeon noted, gathering data across disconnected systems is a firm prerequisite for safe surgical planning. Relieving physicians of this burden represents one of the most immediate opportunities for AI to improve both workflow efficiency and patient safety.

Currently, most AI tools available to physicians are incapable of this level of synthesis, forcing physicians to perform the high-friction search for critical data points themselves. While LLMs are proficient at summarizing a single, clean block of text, they fail at the complex cross-referencing required to track a patient’s journey through fragmented databases and across multi-modal data including imaging and laboratory results. For models to bridge this gap, they must pivot from "document summarizers" to "data synthesizers" capable of supporting complex clinical workflows like the tumor board, where the messy reality of medical records spanning multiple health systems, clinicians, and formats meets critical decision-making about a complex cancer patient’s treatment plan.

II. Documentation Intelligence: Eliminating the Manual Documentation Burden

Physicians consistently identify documentation as one of the most time-consuming aspects of modern practice—making it one of the clearest opportunities for AI to deliver immediate value. Beyond simple scribing, there is a high demand for the automated generation of documents such as HPIs and daily progress notes, which currently require doctors to manually re-type results and findings already residing elsewhere in the system. To combat this burden, many clinicians “copy forward” old notes, deleting and editing small sections to save time. While efficient in the short term, this practice can propagate outdated or incorrect information across the chart, contributing to documentation inaccuracies that can influence downstream clinical decision-making and erode trust among collaborating providers.

Most physicians in this study currently using ambient AI documentation tools report that these have meaningfully reduced portions of this burden. Clinicians frequently report time savings in pre-charting and in drafting assessment and plan sections, particularly for straightforward encounters. By eliminating the “blank page” problem and automating baseline transcription, these systems reduce cognitive load and allow physicians to engage more fully during patient visits. Evidence of time and mental bandwidth saved from the use of ambient scribes offers an early glimpse into how documentation intelligence can dramatically reduce clerical burden.

However, physicians consistently note that current systems underperform in more complex clinical contexts. Tasks such as synthesizing longitudinal history, interpreting laboratory trends, documenting nuanced physical exam findings, and extracting relevant past medical history from across the chart require cross-referencing structured and unstructured data over time. Because these functions extend beyond live transcription, clinicians often find themselves manually retrieving and integrating critical information, limiting true efficiency gains.

In addition to these limitations, physicians frequently raise concerns about errors of omission and commission. Many tools generate overly verbose notes that obscure clinically meaningful signals beneath excessive dialogue, requiring substantial editing to condense documentation to its most relevant elements. At the same time, these systems may omit key details or oversimplify complex discussions—such as reducing a lengthy shared decision-making conversation to a single line or misrepresenting the physician’s medical reasoning. This dual failure mode of over- and under-documentation is a significant barrier to trust, and for some clinicians, the resulting editing burden has led them to restrict or discontinue ambient scribe use.

For AI documentation systems to become truly transformative, development must address both documentation fidelity and personalization. Beyond reducing omission and commission errors, systems must also work toward “style mimicry”: the ability to ingest a physician’s historical notes and replicate their shorthand, clinical prioritization, structural preferences, and documentation voice. Physicians consistently report that without alignment to their documentation style and clinical reasoning patterns, time saved in transcription is partially reclaimed through heavy editing in order to meet their standards.

While ambient scribe tools show meaningful progress, the transition from “AI-generated draft” to “final clinical note” remains friction-filled. Until systems can reliably synthesize longitudinal data, appropriately weight clinical significance, and mirror individual physician style with high fidelity, they will function primarily as assistive drafting tools rather than true documentation intelligence platforms.

III. System Interoperability: Navigating Fragmented Architectures

The fragmented architecture of clinical software presents one of the most important opportunities for AI to create seamless clinical workflows. Physician workflows are defined by constant tab-switching, multiple logins, and navigation across external portals that do not communicate with the primary EHR. Clinical preparation becomes a manual aggregation practice rather than a cohesive workflow. One surgeon described preparing for a case as opening separate scheduling programs and EMR tabs, then manually abstracting and retyping data from pathology reports, operative notes, and radiology findings simply to generate a single surgical calendar entry. The next generation of clinical AI has the opportunity to move beyond being a separate destination and instead function as a native bridge across clinical systems.

The current lack of interoperability also creates delays in clinical decision support, particularly when physicians rely on tools that exist outside the EHR. Determining patient disposition for high-acuity cases can be especially cumbersome despite involving simple, widely adopted tools. One physician noted that manually adding up a PORT or a CURB-65 score in an external calculator to determine pneumonia severity is an inconvenient step that requires pulling lab results and medical history into a separate support tool. Delays in these calculations can stall critical admission decisions or lead to inappropriate decisions about where to transfer the patient for care. A seemingly “easy win” for an AI tool would be to automatically incorporate those scores into the chart in real time, transforming the process from a manual, multi-step problem into an integrated support utility.
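To illustrate how small the underlying calculation is, the following is a minimal sketch of a CURB-65 scorer. It assumes the five inputs have already been pulled from the chart; the `Curb65Inputs` container, its field names, and the `risk_band` helper are hypothetical and not part of any EHR or vendor API. The thresholds follow the commonly published CURB-65 criteria, and the risk bands are a simplification that leaves the disposition decision with the clinician.

```python
from dataclasses import dataclass


@dataclass
class Curb65Inputs:
    """Hypothetical container for values an integration would pull from the chart."""
    confusion: bool        # new-onset confusion
    bun_mg_dl: float       # blood urea nitrogen, mg/dL
    respiratory_rate: int  # breaths per minute
    systolic_bp: int       # mmHg
    diastolic_bp: int      # mmHg
    age_years: int


def curb65_score(p: Curb65Inputs) -> int:
    """Return the CURB-65 score (0 to 5): one point per criterion met."""
    criteria = [
        p.confusion,
        p.bun_mg_dl > 19,                            # equivalent to urea > 7 mmol/L
        p.respiratory_rate >= 30,
        p.systolic_bp < 90 or p.diastolic_bp <= 60,
        p.age_years >= 65,
    ]
    return sum(criteria)


def risk_band(score: int) -> str:
    """Map a score to a coarse risk band (illustrative simplification)."""
    if score <= 1:
        return "low"
    if score == 2:
        return "moderate"
    return "high"


# Illustrative patient; the values are made up for the example.
patient = Curb65Inputs(confusion=False, bun_mg_dl=24.0, respiratory_rate=32,
                       systolic_bp=104, diastolic_bp=58, age_years=71)
score = curb65_score(patient)
print(score, risk_band(score))
```

The arithmetic is trivial; the friction physicians describe comes from gathering the six inputs across systems, which is the part an integrated tool would need to automate.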

Ultimately, our results indicate that AI adoption will remain slow unless the technology moves toward a truly native-feeling experience for the physician. Any new feature that does not immediately decrease administrative friction or increase the quality of care will be rejected in favor of established systems, however flawed they may be. To achieve this level of utility, AI agents must be capable of executing long-horizon tasks that span multiple platforms, from EMRs to clinical guideline sources to calendars, and of maintaining high-fidelity, persistent reasoning across the entire ecosystem of software that a physician touches daily. For the clinician, the goal is AI support that operates as a quiet background utility, one that autonomously manages work across fragmented databases so that the right data is in the right place at the moment it is needed to serve a patient.

IV. Knowledge Retrieval: Evidence-Based Reasoning Support

Our physicians consistently described AI-powered knowledge retrieval as one of the most exciting opportunities for improving clinical decision-making, with many already leveraging physician-oriented LLMs like OpenEvidence. The manual process of looking up diagnostic guidelines or searching PubMed to navigate complex, undifferentiated cases is a significant time drain. An ideal clinical AI would automatically surface differential diagnoses based on the specific patient history, supported by vetted medical literature with clear citations and an indication of evidence strength, while stating its own degree of confidence and the limits of the available evidence. As one clinician described it, the ideal system would ingest age, history, physical exam findings, and imaging to generate a "differential menu" with supporting evidence from medical literature. This vision requires three essential features: a thorough differential ranked by likelihood, evidence-backed reasoning, and a concrete plan that includes potential further workup. By moving from a "blank page" to a structured menu of possibilities, AI transforms the diagnostic process from a manual research task into a high-level clinical review.

The demand for this level of automated precision becomes even more pronounced in specialized clinical research, particularly in oncology and rare disease. Clinical trial matching is consistently cited as an area with substantial unmet need, further reflected by extremely low rates of clinical trial enrollment, even in academic medical centers with robust research infrastructure. Determining trial eligibility requires parsing highly granular inclusion and exclusion criteria, reconciling them against heterogeneous and longitudinal patient data, and tracking ongoing protocol amendments or newly opened studies. Trial eligibility criteria are frequently written in semi-structured prose, include nested logical conditions, and depend on precise lab thresholds, prior lines of therapy, molecular markers, performance status scores, and timing windows. Many of these data points are buried in unstructured notes, pathology reports, or scanned documents. Currently, AI tools are seen as less efficient than human trial coordinators; they often miss studies or lack the necessary nuance to scour a patient’s deep longitudinal history. To close this gap, models must improve in their ability to screen inclusion/exclusion criteria against deep chart data with appropriate sensitivity, ensuring that viable treatment paths are not overlooked.

An ideal system for AI clinical trial matching would function as a continuous background monitor: ingesting molecular pathology results, updated imaging, changes in ECOG status, and evolving treatment history, then proactively alerting the care team when a patient newly qualifies for a trial. It would provide transparent reasoning, explicitly mapping each eligibility criterion to supporting data in the chart, so clinicians can audit its logic. Just as importantly, it would quantify uncertainty, flag missing data required for confirmation, and distinguish between definite, possible, and unlikely matches.
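The auditable, criterion-by-criterion screening described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a description of any existing trial-matching product: the `Criterion`, `Finding`, and `screen` names, the chart field names, and the three example criteria are all hypothetical, and each criterion is modeled as a simple predicate that must hold for the patient to remain eligible.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Criterion:
    name: str
    field: str                        # chart field the criterion depends on (hypothetical)
    is_met: Callable[[object], bool]  # predicate over that field's value


@dataclass
class Finding:
    criterion: str
    status: str                 # "met", "not_met", or "missing"
    evidence: Optional[object]  # chart value supporting the status, if any


def screen(chart: dict, criteria: list[Criterion]) -> tuple[str, list[Finding]]:
    """Evaluate every criterion and keep the supporting chart value for audit."""
    findings = []
    for c in criteria:
        value = chart.get(c.field)
        if value is None:
            findings.append(Finding(c.name, "missing", None))
        elif c.is_met(value):
            findings.append(Finding(c.name, "met", value))
        else:
            findings.append(Finding(c.name, "not_met", value))

    statuses = {f.status for f in findings}
    if "not_met" in statuses:
        overall = "unlikely"   # at least one criterion is contradicted by the chart
    elif "missing" in statuses:
        overall = "possible"   # nothing contradicted, but data is incomplete
    else:
        overall = "definite"   # every criterion is supported by chart data
    return overall, findings


# Illustrative criteria and chart; none of these come from a real protocol.
criteria = [
    Criterion("ECOG performance status <= 1", "ecog", lambda v: v <= 1),
    Criterion("At most 2 prior lines of therapy", "prior_lines", lambda v: v <= 2),
    Criterion("Hemoglobin >= 9.0 g/dL", "hemoglobin_g_dl", lambda v: v >= 9.0),
]
chart = {"ecog": 1, "prior_lines": 2}  # hemoglobin not yet resulted

overall, findings = screen(chart, criteria)
print(overall)
for f in findings:
    print(f"{f.criterion}: {f.status} (evidence: {f.evidence})")
```

Returning the per-criterion findings alongside the overall label is the design choice that matters here: a "possible" match tells the care team exactly which data point is missing. Real eligibility criteria are nested and time-windowed, so a production system would need a richer representation than flat predicates.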

V. Patient Triage: Filtering the Noise

The physician inbox now contains a tremendous volume of patient communication, creating a clear opportunity for AI systems to intelligently prioritize and manage incoming requests. The inbox has become a source of "drowning" levels of data, yet the weight of this burden is often misunderstood as a purely quantitative issue. In reality, approximately 90-95% of incoming messages in a primary care setting are categorized as low-acuity, consisting of routine requests or questions regarding minor illnesses. These interactions are fatiguing to the clinician not because they are cognitively complex, but because they are relentlessly repetitive and time-consuming. This constant stream of low-priority but must-answer inquiries, such as requests for prescription refills or confirmation of normal lab results, consumes limited time and cognitive bandwidth and leads to a progressive "empathy fatigue," in which the clinician’s mental energy is drained by clerical repetition before they can address complex patient needs.

This administrative burden is exacerbated by a structural triage gap within current healthcare delivery. Because the first point of patient contact is typically a medical receptionist or scheduler with no formal clinical training, the system is prone to “semantic drift,” where vocabulary used by non-clinical staff or basic keyword-based filters misrepresents the clinical reality. For example, a patient’s report of shortness of breath might be flagged as an emergency when it is merely a lingering wheeze, or heartburn might be overlooked when it actually indicates a cardiac event. This drift often leads to a misallocation of resources, where non-critical patients are scheduled into high-urgency time slots while urgent cases may be delayed. AI systems have the potential to evolve from passive intake mechanisms into intelligent triage agents capable of safely prioritizing care. For this to be effective, however, the AI must be exceedingly accurate. If the system routinely over-escalates low-acuity cases, it distorts clinical prioritization, consumes limited physician bandwidth, and compresses the time available for patients with truly urgent needs. Effective AI triage must simultaneously protect patient safety and preserve system efficiency, directing clinical resources toward the right patients at the right time without overwhelming the infrastructure designed to deliver care.
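The cost of over-escalation can be made concrete with a short calculation. The sketch below is illustrative only: the 5% urgent fraction, the sensitivity, and the specificity are assumed numbers chosen for the example, not survey findings, and `escalation_summary` is a hypothetical helper.

```python
def escalation_summary(n_messages: int, urgent_fraction: float,
                       sensitivity: float, specificity: float) -> dict:
    """Estimate how many messages a triage model escalates and how many are truly urgent."""
    urgent = n_messages * urgent_fraction
    non_urgent = n_messages - urgent
    true_pos = urgent * sensitivity             # urgent messages correctly escalated
    false_pos = non_urgent * (1 - specificity)  # low-acuity messages over-escalated
    escalated = true_pos + false_pos
    return {
        "escalated": escalated,
        "missed_urgent": urgent - true_pos,
        # Share of escalations that are truly urgent (positive predictive value).
        "ppv": true_pos / escalated if escalated else 0.0,
    }


# Assumed scenario: 1,000 messages, 5% urgent, 95% sensitivity, 90% specificity.
summary = escalation_summary(1000, 0.05, 0.95, 0.90)
print({k: round(v, 2) for k, v in summary.items()})
```

Under these assumed numbers, only about one in three escalated messages is truly urgent, even though the model looks accurate on paper. Because low-acuity messages dominate the inbox, small losses in specificity translate into a large share of unnecessary escalations.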

To effectively "clear the confusion" for the physician, the foundational requirement for clinical AI is a shift from passive data observation to proactive clinical assessment. This mimics the function of a telehealth nurse: the ability to ask targeted questions to collect critical information, acknowledge and address ambiguity, note "yellow and red flag" symptoms, and set appropriate next steps based on a real-time assessment of the patient’s status. When this proactive triage is paired with an analysis of longitudinal laboratory results, rather than isolated snapshots of patient symptoms, the system can accurately distinguish between predictable side effects that are a known part of the treatment and new, dangerous complications that require a doctor’s immediate attention. By autonomously resolving the majority of low-complexity requests through high-fidelity clinical judgment, the AI tool ensures that a physician’s primary cognitive resources are reserved for the critical portion of cases that demand specialized medical expertise.

Summary and Implications

The next wave of clinical AI innovation will not be defined by benchmark performance alone, but by systems that meaningfully improve everyday clinical workflows and patient care. Physician adoption will be earned by tools that eliminate the structural friction embedded in those workflows, and lost the moment those tools introduce new cognitive burdens or patient safety risks. This study identifies five domains where AI has the highest-leverage opportunity to deliver that value: Data Aggregation, Documentation Intelligence, System Interoperability, Knowledge Retrieval, and Patient Triage.

For foundation labs and clinical AI developers, each domain points to a distinct and addressable gap. On aggregation, the priority is true cross-system data synthesis, such as the kind required for multi-disciplinary patient review meetings, preoperative prep, and longitudinal treatment review across fragmented records. On documentation, ambient scribe tools have made meaningful progress, but the gap between AI-generated draft and final clinical note remains wide; closing it requires better handling of complex clinical context, fewer omission and commission errors, and style personalization at the physician level. On interoperability, AI must operate as a native background utility spanning EMRs, external portals, and clinical decision tools without adding steps to existing workflows. On knowledge retrieval, the opportunity is at the point of care: real-time differential support with cited, evidence-graded reasoning, and continuous clinical trial matching that proactively surfaces eligibility. On triage, physicians need AI tools capable of proactive patient assessment that resolve low-acuity requests without increasing noise or eroding the prioritization of urgent cases.

Our report found that physicians are eager for AI systems that remove workflow friction, synthesize complex clinical data, and ultimately allow them to spend more time on the work that matters most: delivering high-quality care to their patients.

Cohort Characteristics

Institutions Represented

UCLA Health • Yale School of Medicine • MD Anderson Cancer Center • Mayo Clinic • Cleveland Clinic • NYU Langone Health • Duke Health • University of Chicago Medicine • UT Southwestern • Moffitt Cancer Center • Fox Chase Cancer Center • Tufts Medical Center • Barnes-Jewish Hospital • UPMC • Montefiore Health System • Henry Ford Health • NYU Tisch • NYC Health + Hospitals • Cook Children’s • Unity Health Network • UMC of Southern Nevada • St. Cloud Hospital • University of Mississippi Medical Center • University of Illinois Hospital • Mercy Hospital Joplin • WVU Community Cancer Center • Boston Medical Center

Specialties Represented

Urologic Oncology • Transplant Surgery • Orthopedic Surgery • Endocrine Surgery • Facial Plastic Surgery • Urology • Medical Oncology • Hematology • Cutaneous Oncology • Pediatric Hematology-Oncology • Pediatric Infectious Diseases • Emergency Medicine • Pathology • Internal Medicine • Nephrology • Obstetrics and Gynecology • Neurology

Related Research

Holmes, John H et al. “Why Is the Electronic Health Record So Challenging for Research and Clinical Care?” Methods of Information in Medicine vol. 60,1-02 (2021): 32-48. doi:10.1055/s-0041-1731784

Upadhyay, Soumya, and Han-Fen Hu. “A Qualitative Analysis of the Impact of Electronic Health Records (EHR) on Healthcare Quality and Safety: Clinicians' Lived Experiences.” Health Services Insights vol. 15 11786329211070722. 3 Mar. 2022, doi:10.1177/11786329211070722

Senathirajah, Yalini et al. “Characterizing and Visualizing Display and Task Fragmentation in the Electronic Health Record: Mixed Methods Design.” JMIR Human Factors vol. 7,4 e18484. 21 Oct. 2020, doi:10.2196/18484